Simula 67 introduced object inheritance in 1967. Thus was born late binding: the program does not know which implementation of a service on an object is invoked until runtime. Inheritance turned out to be pretty useful. By the late 1990s, the emphasis had shifted toward interface inheritance rather than implementation inheritance, and we now live with the mantra of "composition over inheritance" for software design. Nevertheless, polymorphism lives on in most newer languages; Joe Armstrong's "Why OO Sucks" rant, from the creator of Erlang, is an entertaining and notable exception. By the way, besides inheritance, Simula 67 also introduced objects, classes, subclasses, virtual methods, coroutines, and discrete event simulation. The '60s were wild.

In 1980, Smalltalk introduced the doesNotUnderstand: message. In many programming languages, invoking a service that does not exist on an object raises some sort of MethodMissing exception. In Smalltalk, instead of failing, the VM sends the doesNotUnderstand: message to the object, along with the selector of the original message and its arguments. You can implement any logic you want within that handler, and callers can't tell that this particular message wasn't part of your declared API. Thus was born even later binding: the program does not know which services an object provides until runtime.
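The mechanism is easiest to see by emulating it. The Java sketch below is hypothetical: `Receiver`, `send`, and this `doesNotUnderstand` method are names invented for illustration, and a real Smalltalk VM hands the handler a Message object rather than a selector string and an argument array. Known selectors are looked up in a table at runtime; anything else falls through to the catch-all hook instead of throwing.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;

public class DoesNotUnderstandDemo {

    /** Hypothetical receiver that resolves selectors at runtime and falls back to a catch-all hook. */
    static class Receiver {
        // The "declared API": selectors this object answers directly.
        private final Map<String, Function<Object[], Object>> methods = new HashMap<>();

        Receiver() {
            methods.put("greet", a -> "Hello, " + a[0]);
        }

        /** Sends a message; unknown selectors go to doesNotUnderstand instead of raising an error. */
        Object send(String selector, Object... args) {
            Function<Object[], Object> method = methods.get(selector);
            if (method != null) {
                return method.apply(args);
            }
            return doesNotUnderstand(selector, args);
        }

        /** Catch-all hook: receives the selector and arguments and may run arbitrary logic. */
        Object doesNotUnderstand(String selector, Object[] args) {
            if (selector.startsWith("shout")) {
                // Synthesize a whole family of "methods" on the fly.
                return selector.substring("shout".length()).toUpperCase() + "!";
            }
            return "I don't understand " + selector + Arrays.toString(args);
        }
    }

    public static void main(String[] args) {
        Receiver r = new Receiver();
        System.out.println(r.send("greet", "Smalltalk")); // declared method
        System.out.println(r.send("shoutHello"));         // synthesized at runtime
        System.out.println(r.send("fly", 42));            // generic fallback
    }
}
```

The caller uses the same `send` call in all three cases, which is the point: it cannot tell a declared method from a synthesized one.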

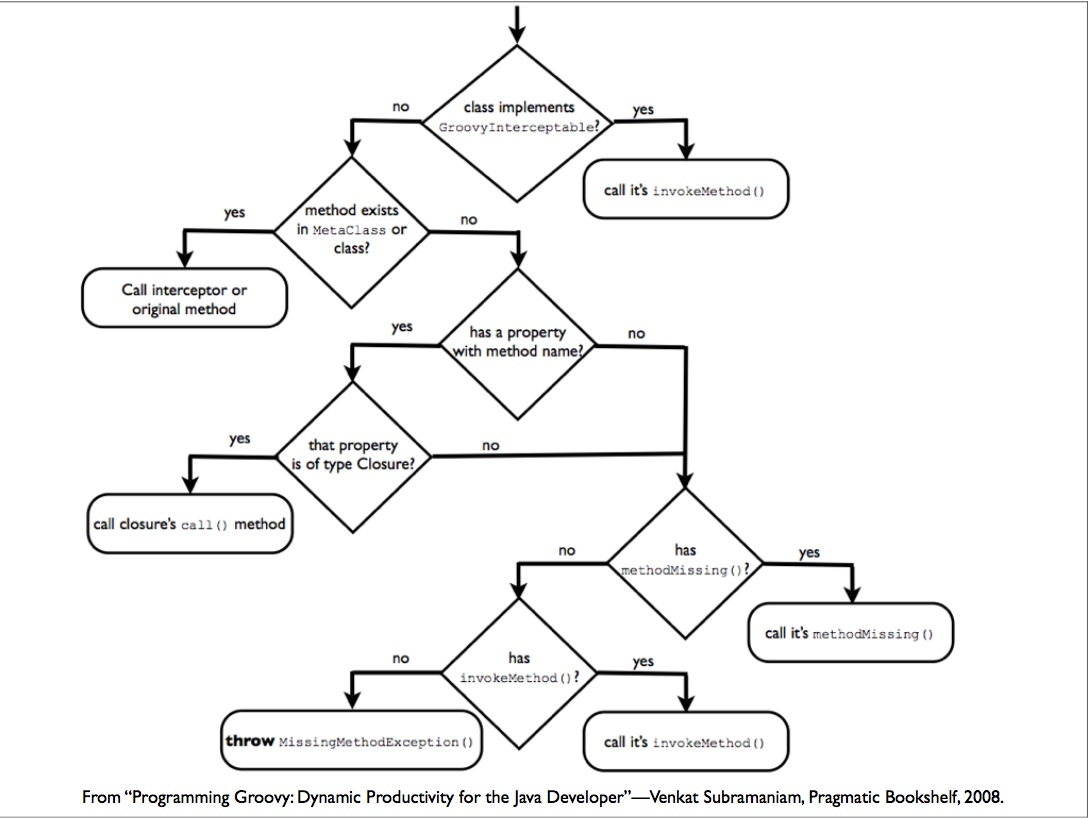

Sometime after its first release in 2003, Groovy introduced invokeMethod and getProperty. In a language built around the idea of messages, as Smalltalk is, the doesNotUnderstand: message is a simple way to expose method selection to the user. The VM doesn't locate the method for you; it simply tells you what was asked to be invoked and gives you easy hooks to respond. The Java platform is different. The compiler does some of this work for you and the runtime does the rest. The method resolution rules aren't simple, and the result of finding a method to invoke is currently baked into the class's bytecode (invokedynamic is coming, though). Groovy works around this with the invokeMethod and getProperty hooks. Any object in the Groovy platform can override these methods to provide runtime service creation. Your object doesn't have a findByCityAndState(String, String) method? No problem: override invokeMethod and provide one at runtime. (Note: GORM actually uses ExpandoMetaClass for dynamic finders, but the intent is the same.) Groovy wrote its own runtime method dispatch algorithm that sits on top of Java's, and it lets you hook into it.

I mentioned the concept was simple in Smalltalk. The diagram above, courtesy of Venkat Subramaniam, shows the Groovy dispatch logic. Simple? I say no. Is it the same way methods are dispatched in Rhino, JRuby, or Jython, all dynamic languages on the JVM? No.

A lot of coding in dynamic JVM languages occurs in a mixed environment where Java and dynamic code coexist in the same process. What happens when Java invokes findByCityAndState? Trick question: it can't be done. Methods synthesized within invokeMethod do not appear in the public API of the class bytecode, so the Java compiler can't see the method and the call won't compile. Even if the method were in the class, the Java code would call straight into the method implementation and bypass Groovy's dispatch entirely. What happens when a Jython script calls into a Groovy script? Again, the call would be filtered through the Java layer, so no cross-language metaprogramming would be possible unless someone wrote a custom Groovy-aware adapter that replicated Groovy's method dispatch.
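Here is a rough Java analogue of overriding invokeMethod to synthesize a dynamic finder such as findByCityAndState. It assumes finder names of the form `findBy<Prop>And<Prop>` and rows stored as maps; `PersonRepository` and its `invokeMethod` are hypothetical stand-ins, not Groovy's actual `GroovyObject` interface or GORM's implementation. It also illustrates the interop problem: plain Java can only reach the finder by passing its name as a string to the hook, never as an ordinary compiled method call.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.Objects;

public class DynamicFinderDemo {

    /** Hypothetical repository whose finders are synthesized from the method name at call time. */
    static class PersonRepository {
        private final List<Map<String, Object>> rows;

        PersonRepository(List<Map<String, Object>> rows) {
            this.rows = rows;
        }

        /** Stand-in for Groovy's invokeMethod: one entry point that receives every method name. */
        Object invokeMethod(String name, Object[] args) {
            String suffix = name.startsWith("findBy") ? name.substring("findBy".length()) : "";
            if (suffix.isEmpty()) {
                throw new UnsupportedOperationException("No such method: " + name);
            }
            // "findByCityAndState" -> ["City", "State"] -> properties "city", "state"
            String[] parts = suffix.split("And");
            if (parts.length == 0 || Arrays.asList(parts).contains("")) {
                throw new IllegalArgumentException("Malformed finder: " + name);
            }
            if (parts.length != args.length) {
                throw new IllegalArgumentException(name + " expects " + parts.length + " argument(s)");
            }
            List<Map<String, Object>> matches = new ArrayList<>();
            for (Map<String, Object> row : rows) {
                boolean allMatch = true;
                for (int i = 0; i < parts.length && allMatch; i++) {
                    String property = Character.toLowerCase(parts[i].charAt(0)) + parts[i].substring(1);
                    allMatch = Objects.equals(args[i], row.get(property));
                }
                if (allMatch) {
                    matches.add(row);
                }
            }
            return matches;
        }
    }

    public static void main(String[] args) {
        List<Map<String, Object>> people = List.of(
                Map.of("name", "Ann", "city", "Minneapolis", "state", "MN"),
                Map.of("name", "Bob", "city", "Saint Paul", "state", "MN"),
                Map.of("name", "Cy", "city", "Minneapolis", "state", "KS"));
        PersonRepository repo = new PersonRepository(people);
        // Java source cannot say repo.findByCityAndState(...): no such method exists at
        // compile time, so the caller has to route the name through the hook.
        @SuppressWarnings("unchecked")
        List<Map<String, Object>> found = (List<Map<String, Object>>)
                repo.invokeMethod("findByCityAndState", new Object[] {"Minneapolis", "MN"});
        for (Map<String, Object> person : found) {
            System.out.println(person.get("name")); // prints Ann
        }
    }
}
```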

Clearly, users want control over how services on objects are located, and a cross-language solution would be best. Enter 2007 and Attila Szegedi's JVM Dynamic Languages Metaobject Protocol Library. Instead of baking method dispatch into each language runtime, the library externalizes it into a metaobject protocol (MOP). So in Rhino, the JVM JavaScript engine, instead of interacting with the Scriptable object to get a property like this:

```java
((Scriptable) obj).get("foo", (Scriptable) obj);
```

You'd interact with the MOP, like this:

```java
metaobjectProtocol.get(obj, "foo");
```

I believe IRubyObject serves the same purpose as Scriptable in JRuby. In fact, most languages have the concept of a parent adapter class, which Attila calls a "Navigator", and the MOP would replace it. The power of this is a universal invocation interface: cross-language compatibility is ensured by routing method invocations through the MOP, and each language would come with its own MOP implementation.
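To illustrate the universal invocation interface, here is a much-simplified Java sketch. `Mop`, `MapMop`, `FieldMop`, and `readX` are hypothetical names that do not reproduce the actual signatures in Attila's library; `MapMop` stands in for a dynamic language whose objects are modeled as maps, and `FieldMop` for plain Java objects. The caller is written once against the interface and works with either object model.

```java
import java.lang.reflect.Field;
import java.util.HashMap;
import java.util.Map;

public class UniformMopDemo {

    /** Hypothetical, simplified MOP: a single way to read a property from any kind of object. */
    interface Mop {
        Object get(Object target, String property);
    }

    /** Stand-in for a dynamic language's MOP, whose objects are modeled here as maps. */
    static class MapMop implements Mop {
        public Object get(Object target, String property) {
            return ((Map<?, ?>) target).get(property);
        }
    }

    /** Stand-in for a Java MOP, reading public fields reflectively. */
    static class FieldMop implements Mop {
        public Object get(Object target, String property) {
            try {
                Field field = target.getClass().getField(property);
                return field.get(target);
            } catch (NoSuchFieldException | IllegalAccessException e) {
                throw new IllegalArgumentException("No readable property: " + property, e);
            }
        }
    }

    /** Example Java object with a public field. */
    public static class Point {
        public int x = 3;
    }

    /** Caller code written once against the MOP, with no knowledge of the object's origin. */
    static Object readX(Mop mop, Object target) {
        return mop.get(target, "x");
    }

    public static void main(String[] args) {
        Map<String, Object> scriptObject = new HashMap<>();
        scriptObject.put("x", 7);
        System.out.println(readX(new MapMop(), scriptObject));  // prints 7
        System.out.println(readX(new FieldMop(), new Point())); // prints 3
    }
}
```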

The coolness of this is the composability of the MOPs. The composite runtime MOP might be composed of a Groovy MOP, a JRuby MOP, and a Java MOP. When a method is invoked, the chain of MOPs is consulted in order. If the first MOP can handle the invocation, it does so; if it cannot, it replies with "No Authority" and the next MOP in the chain is called. The CompositeMetaobjectProtocol class is just the plain old Composite pattern. Who says design patterns are dead? And while each language would have its own MOP, you could certainly write your own for your library or framework. This puts the method resolution algorithm in the hands of the user.
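The "No Authority" chain can be sketched as follows. The `NO_AUTHORITY` sentinel, the `Mop` interface, and the two child MOPs are hypothetical simplifications, not the library's real CompositeMetaobjectProtocol API; `MapMop` and `StringMop` merely stand in for per-language MOPs such as Groovy's or JRuby's. `CompositeMop` is itself a `Mop`, which is what makes this the Composite pattern: a composite can be nested inside another composite.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class CompositeMopDemo {

    /** Sentinel meaning "this MOP has no authority over the request; ask the next one". */
    static final Object NO_AUTHORITY = new Object();

    /** Hypothetical, simplified MOP interface (not the library's real signature). */
    interface Mop {
        Object get(Object target, String property);
    }

    /** Claims only Map-based "script" objects. */
    static class MapMop implements Mop {
        public Object get(Object target, String property) {
            if (!(target instanceof Map)) {
                return NO_AUTHORITY;
            }
            return ((Map<?, ?>) target).get(property);
        }
    }

    /** Claims only CharSequence objects, exposing a synthetic "length" property. */
    static class StringMop implements Mop {
        public Object get(Object target, String property) {
            if (target instanceof CharSequence && property.equals("length")) {
                return ((CharSequence) target).length();
            }
            return NO_AUTHORITY;
        }
    }

    /** Composite pattern: itself a Mop, delegating to its children in order. */
    static class CompositeMop implements Mop {
        private final List<Mop> chain;

        CompositeMop(List<Mop> chain) {
            this.chain = chain;
        }

        public Object get(Object target, String property) {
            for (Mop mop : chain) {
                Object result = mop.get(target, property);
                if (result != NO_AUTHORITY) {
                    return result; // first MOP that claims the request wins
                }
            }
            return NO_AUTHORITY; // nobody in the chain claimed it
        }
    }

    public static void main(String[] args) {
        Mop runtime = new CompositeMop(List.of(new MapMop(), new StringMop()));
        Map<String, Object> scriptObject = new HashMap<>();
        scriptObject.put("foo", "bar");
        System.out.println(runtime.get(scriptObject, "foo"));       // bar (MapMop)
        System.out.println(runtime.get("hello", "length"));         // 5 (StringMop)
        System.out.println(runtime.get(42, "foo") == NO_AUTHORITY); // true
    }
}
```

Adding a framework-specific MOP is just a matter of putting another `Mop` at the front of the list.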

The history of programming languages doesn't seem to be about making the right fundamental decisions and having further work build on them. It seems to be about finding ways to safely put more and more flexibility in the hands of programmers. I relish the idea of one day being able to safely modify method invocation in a language, and the Dynamic MOP seems like a good step forward.
